Introduction

Enterprises need visibility into how models are making predictions. Mission-critical decisions cannot be made using a black box. Teams need to understand and explain model outputs. One way to do this is to understand how each input feature affects the outcome.

ModelOp Center provides a framework for calculating, tracking, and visualizing Model Interpretability metrics. Each of these can be determined on a model-by-model basis. You can also enforce a standard using an MLC Process as needed. The subsequent sections provide more detail on how to use ModelOp Center to implement Interpretability into your ModelOps program.

Interpretability

While model interpretability is a complex and rapidly changing subject, especially for AI models, ModelOp Center can help you understand and calculate how much each feature contributes to each individual prediction. In other words, these metrics show the impact of each feature on each prediction and allow each feature’s contribution to be monitored over time. ModelOp Center does this by leveraging SHAP: it expects a trained SHAP explainer artifact and computes the SHAP values over the input dataset. The SHAP results are persisted with the Test Results for the model for auditability and tracking over time. Note: not all models are easily interpretable, so model interpretability can be a factor in deciding the approach and choice of a model.
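To make "the contribution of each feature to each individual prediction" concrete, here is a minimal sketch that does not use the SHAP library at all. It assumes a purely linear model with independent features, for which the SHAP value of feature i has the closed form w_i * (x_i - mean of feature i over the background data). The weights, intercept, background data, and records below are hypothetical values chosen only for illustration.

```python
import numpy as np

# Hypothetical linear model: f(x) = intercept + weights . x
feature_names = ["log_annual_inc", "log_loan_amnt", "rent_indicator"]
weights = np.array([0.8, -0.5, 0.3])
intercept = 0.1

# Hypothetical background (reference) data and the records to explain
background = np.array([
    [10.0, 9.0, 0.0],
    [11.0, 9.5, 1.0],
    [12.0, 10.0, 1.0],
])
records = np.array([
    [11.5, 9.2, 0.0],
    [10.2, 10.1, 1.0],
])


def model_output(x, weights, intercept):
    """Evaluate the linear model on one record or a matrix of records."""
    return intercept + x @ weights


def linear_shap_values(x, weights, background):
    """SHAP values of a linear model, assuming independent features:
    phi_i = w_i * (x_i - mean of feature i over the background data)."""
    return weights * (x - background.mean(axis=0))


# One row per record, one column per feature
shap_matrix = linear_shap_values(records, weights, background)

# The base value is the model output at the background mean
base_value = model_output(background.mean(axis=0), weights, intercept)

# Additivity: base value plus the contributions reproduces each prediction
reconstructed = base_value + shap_matrix.sum(axis=1)
assert np.allclose(reconstructed, model_output(records, weights, intercept))

for row in shap_matrix:
    print(dict(zip(feature_names, row.round(3).tolist())))
```

Each printed dictionary is the per-feature contribution for one record. For a logistic regression, the same decomposition applies to the log-odds rather than to the probability.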

The following example uses the SHAP library to calculate the impact of the features on the prediction. It calculates the mean absolute SHAP value for each feature, then yields the result as a dictionary.

```python
import pickle

import numpy as np
import pandas as pd
import shap  # the pickled explainer is a SHAP object
from scipy.special import logit  # assumption: logit() is used below but was never imported


# modelop.init
def begin():
    """Load the trained model, SHAP explainer, threshold, and feature list."""
    global explainer, lr_model, threshold, features
    with open("model_artifacts.pkl", "rb") as f:
        model_artifacts = pickle.load(f)
    explainer = model_artifacts["explainer"]
    lr_model = model_artifacts["lr_model"]
    threshold = model_artifacts["threshold"]
    features = model_artifacts["features"]


def preprocess(data):
    """Derive the transformed columns from the raw input columns."""
    prep_data = pd.DataFrame(index=data.index)
    prep_data["logit_int_rate"] = data.int_rate.apply(logit)
    prep_data["log_annual_inc"] = data.annual_inc.apply(np.log)
    prep_data["log_credit_age"] = data.credit_age.apply(np.log)
    prep_data["log_loan_amnt"] = data.loan_amnt.apply(np.log)
    prep_data["rent_indicator"] = data.home_ownership.isin(["RENT"]).astype(int)
    return prep_data


def prediction(data):
    """Return the predicted probability of the class at index 1."""
    return lr_model.predict_proba(data.loc[:, features])[:, 1]


def get_shap_values(data):
    """Return the mean absolute SHAP value per feature, sorted ascending."""
    shap_values = explainer.shap_values(data.loc[:, features])
    shap_values = np.mean(np.abs(shap_values), axis=0).tolist()
    shap_values = dict(zip(features, shap_values))
    return dict(sorted(shap_values.items(), key=lambda item: item[1]))


# modelop.metrics
def metrics(data):
    """Yield a dictionary holding the SHAP feature-importance metrics."""
    results = {}
    prep_data = preprocess(data)
    data = pd.concat([data, prep_data], axis=1)
    data.loc[:, "probabilities"] = prediction(data)
    # Label a record 1 when its probability falls below the threshold
    data.loc[:, "predictions"] = (threshold > data["probabilities"]).astype(int)
    results["shap"] = get_shap_values(data)
    yield results
```
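Because each run yields a plain dictionary of feature names to mean absolute SHAP values, results from two runs can be compared directly to see how contributions change over time. The sketch below is illustrative only and does not describe how ModelOp Center itself performs monitoring. The helper names `shap_shift` and `rank` and the sample values are hypothetical.

```python
def shap_shift(baseline, current):
    """Return the per-feature change in mean absolute SHAP value between
    two runs, ordered by the largest absolute change first."""
    names = sorted(set(baseline) | set(current))
    deltas = {n: current.get(n, 0.0) - baseline.get(n, 0.0) for n in names}
    return dict(sorted(deltas.items(), key=lambda kv: abs(kv[1]), reverse=True))


def rank(shap_values):
    """Order feature names from most to least important."""
    return sorted(shap_values, key=shap_values.get, reverse=True)


# Hypothetical values, shaped like the "shap" entry yielded by metrics()
baseline_run = {"rent_indicator": 0.05, "log_loan_amnt": 0.12, "logit_int_rate": 0.40}
current_run = {"rent_indicator": 0.06, "log_loan_amnt": 0.30, "logit_int_rate": 0.25}

deltas = shap_shift(baseline_run, current_run)
ranking_changed = rank(baseline_run) != rank(current_run)

for name, delta in deltas.items():
    print(f"{name}: {delta:+.3f}")
print("ranking changed:", ranking_changed)
```

A change in the ranking, or a large shift for a single feature, is a reasonable prompt to inspect the input data or the model more closely.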

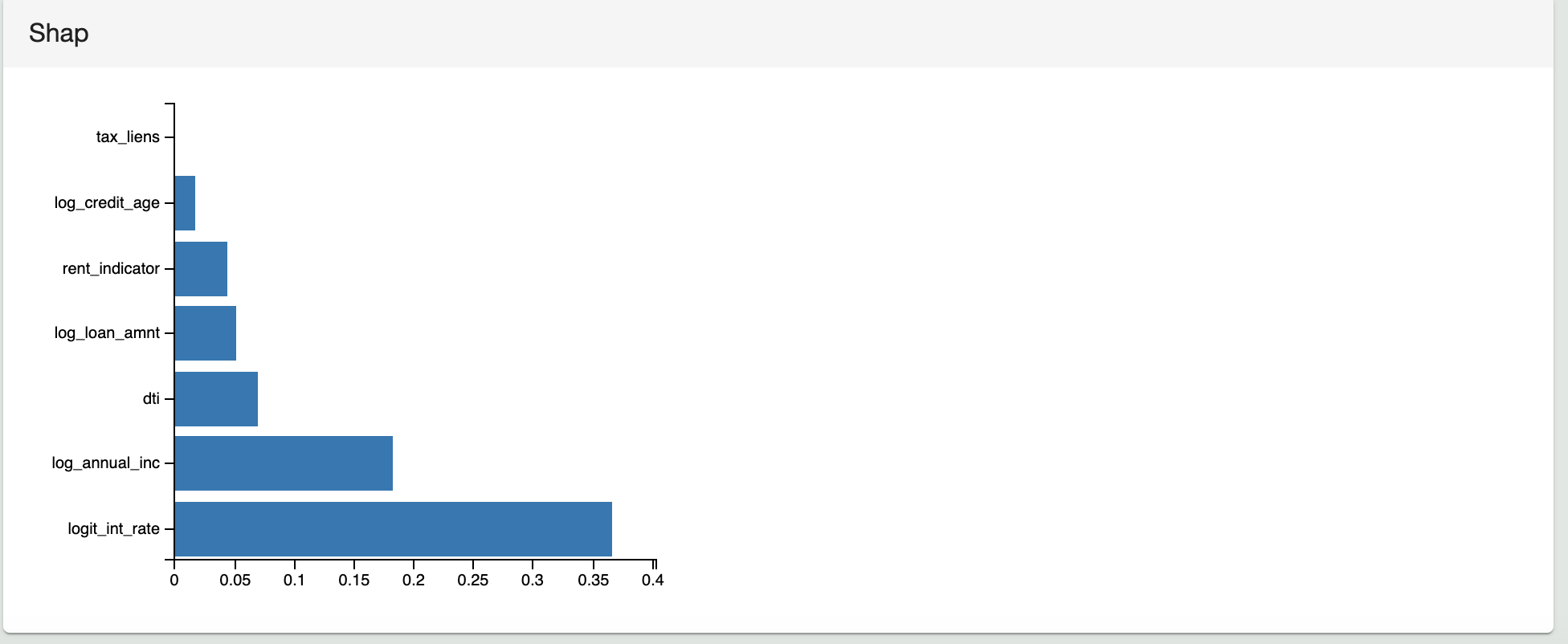

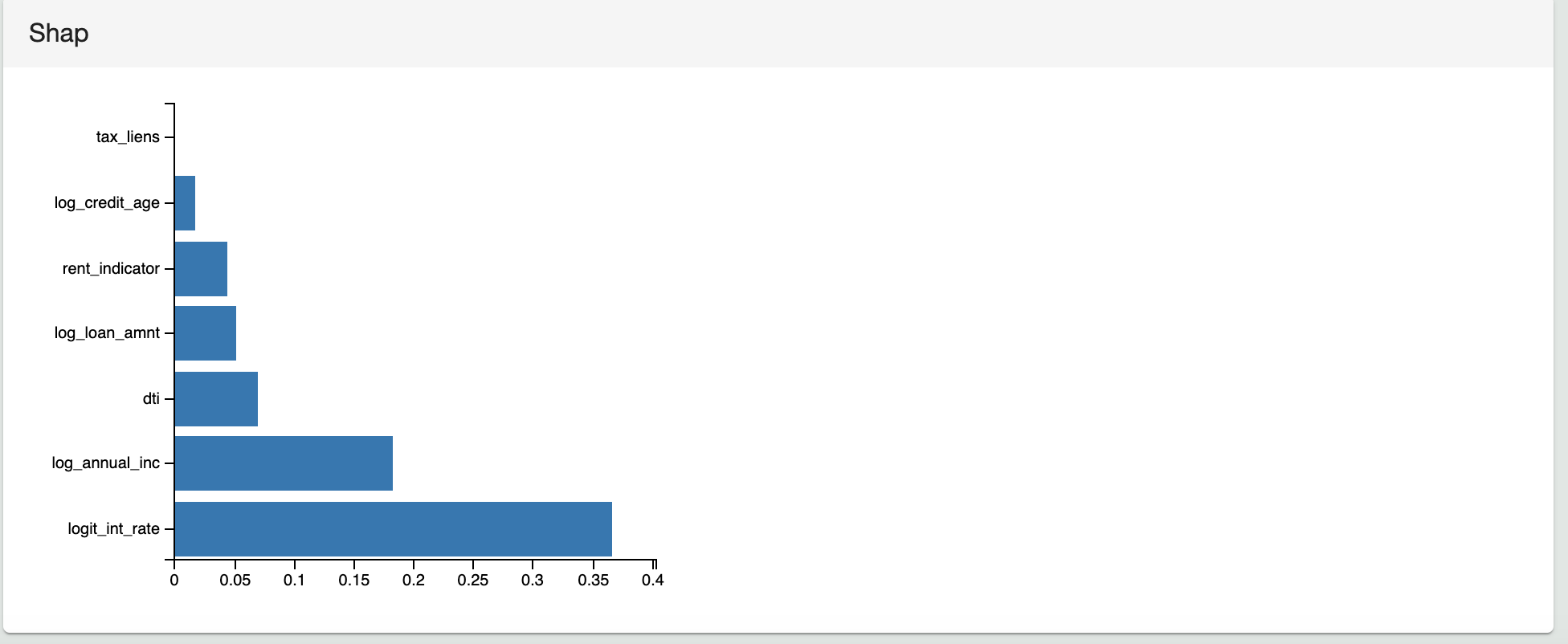

The following image shows the corresponding visualization for the SHAP values of the sample model to the Test Results in ModelOp Center.
